One particular common thread running through recent posts that deserves more attention is the deceptively simple topic of stupidity. What exactly do we mean by stupidity ? What causes it ? Does having some stupid ideas mean you're a stupid person, or is there more to it than that ? Why is it that apparently very intelligent people can believe ridiculously stupid things ? Can you be a clever idiot ?

It might help to begin with a quick read of Brian Koberlein's excellent piece, "You Are Not Stupid". As a response I was tempted to call this post, "You Are Stupid" or at least "You Might Be Stupid", but I resisted. Make no mistake : genuinely, irredeemably stupid people most certainly do exist - but that is far from a complete picture of stupidity. My intention with this post is to present a thorough overview of this fascinating topic. I decided to limit myself to dealing with what people think about the world around them - although some behaviours, like hatred, are also stupid, that would make the topic far too big to tackle.

If artificial intelligence is so difficult to program, presumably artificial stupidity should be much easier. Perhaps Google will be able to benefit from this post and design the stupidest program possible, which could easily pass the Turing Test because no-one would believe a computer would ever be capable of such sheer, unmitigated lunacy.

Definitions

We aren't going to get very far without some basic definitions. Let's start with the very basics : facts. My definition of a fact is something that has been observed and measured (or is mathematically certain), preferably repeatedly and under controlled conditions by different observers. You cannot disprove a fact. Arguably though, there are no true certainties. Maybe the Universe is all a simulation or controlled by a capricious deity. We'll get back to that idea later, but for now, I am making the standard scientific assumption that reality exists, is objective, and measurable.

Secondly, theories. Theories are models which explain how the observed facts come to be. In the strict scientific definition, a theory must make testable predictions, distinct from those of other theories, which have been verified many times*. You can disprove a theory, but it's very hard. It is distinct from a hypothesis, which is a model that is consistent with very limited data. It's fine to say, "it's only a hypothesis" but it's ridiculous to say, "it's only a very well-tested model [i.e. a theory]". Disproving a theory is not something you can do in an afternoon.

* Thus making "string theory" not a theory at all. Lordy, no wonder the public are confused about the word !

Thirdly : evidence. It might be useful to briefly consult my plausibility index at this point. While facts are plentiful, it's very rare that we have a complete understanding of how they occur. Science is built on facts, but it also needs models. If the models lack proof, which would make them certain, they change according to the current evidence. Cutting-edge research is where those models are changing the most rapidly. Theories and hypotheses are not the end of the story - the reality is that there's a spectrum of probability ranging from things we know are definitely not true right up to things which are certain. Many people's beliefs, however, do not correlate well with this.

What Is A Stupid Idea ?

It's very important to bear in mind that we seldom have all the facts - we observe the Universe through a very limited set of sensors. But we do have some facts, and some ideas are indeed truly impossible. The Moon isn't made of cheese : that's a stupid idea. The Universe isn't 300 million km across and bounded by a huge mirror : that's a stupid idea too. The Earth isn't flat and it isn't held up on the back of a giant turtle. We have proof of these things, not merely evidence - they are facts.

However, in some cases we do not have such perfect, irrefutable proof, but we do have lots of very good evidence against many ideas. A "stupidity index" would be the exact reverse of the "plausibility index". So a good working definition might be that a stupid idea is one that is much more likely to be wrong given the available evidence, and a clever idea is one that's much more likely to be correct given the available evidence.

It's important to remember that the evidence will almost certainly change over time, and that there are degrees of both stupidity and intelligence. For example, the idea that the Moon is made of cheese (which is utterly impossible and will never ever become even slightly less stupid) is considerably more stupid than the prospect that the Yeti exists (which merely has little good evidence right now).

Do Stupid Ideas Mean That You Are Stupid ?

No. Since absolute proof is rare, and since people aren't robots, they come to different conclusions regarding different ideas. It's possible to mostly believe in very good ideas (Einstein was right, dark matter is real, ghosts are not real) but simultaneously believe a few really stupid things (dragons exist !*). If you insist that everyone else shares your exact opinions on everything, you're no better than Homer Simpson.

* Personal example but I won't name names.

We might think that a stupid person is one who believes mostly in stupid ideas, and an intelligent person is one who believes mostly in intelligent ideas. Alas, it is not that simple. As we shall see, intelligent people can believe stupid things for entirely rational reasons. So what a person thinks isn't nearly as important as why they think it. Stupid ideas are quite distinct from stupid behaviour.

Stupid Thinking

If we are to understand stupid behaviour, we should first look at some of the reasons why people believe in stupid things. As I see it, there are two categories : primary and secondary causes. A primary cause is the underlying reason why someone initially reaches a highly unlikely or impossible conclusion in defiance of the evidence (or even proof). They are modes of thinking which do not depend on any specific belief. Why people have those ways of thinking in the first place - the root cause of stupidity itself - I shall leave aside for now.

A secondary cause is what prevents them from changing their mind afterwards, and may not only reinforce their view but also cause them to think other stupid things as well. Secondary causes can arise from one or more primary causes. They may be stupid actions in and of themselves, but they also perpetuate further stupidity.

As with all things subjective, these categories are intended as useful labels but don't think of them as being absolutes. And of course, if you can think of any more, let me know. Note that "why do people believe in stupid things ?" is quite a different question from, "why are people stupid ?". But if we are lucky, answering the first might give us clues to the second.

PRIMARY CAUSES

Misinformation : It doesn't matter how rational and objective you are, if you've been told nothing but lies or errors, or lack vital information, you won't be able to reach the correct conclusion : garbage in, garbage out. Thus you can believe a stupid idea for entirely rational reasons and you may even be a very intelligent person indeed.

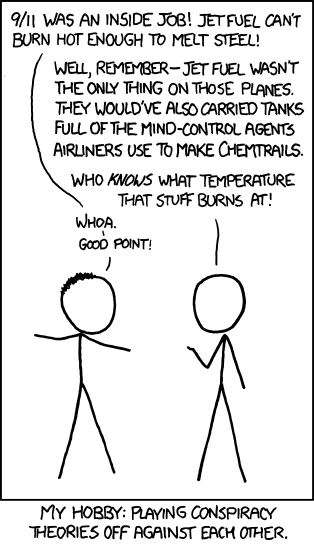

Conspiracy theories exploit this prospect to the hilt. It is possible, just about, that everyone is either lying or has been told a lie so perfectly that they've never stopped to question it. You're not stupid, they say, you've just been lied to. The problem is that whenever one does stop to question it, and finds the "theory" is wrong, believers can still say, "you're lying !".

Preferences : Or, emotions, putting theory before fact, thinking you already know how the Universe works. Winchell Chung adds the important point that people don't like being told what they can and can't do, especially by strangers.

As I've said before many times, all you are is a warm blood-soaked kilo or so of goop sitting inside a skull. You have absolutely no right to tell the Universe how to behave. Your theory may be more beautiful than Scarlett Johansson covered in honey, but if it disagrees with observation (see also next point) it is wrong. Conversely, it may be as ugly as a swollen pustule on the proboscis of a housefly, but it could still be correct.

That said, some theories just don't smell right. For example, singularities are points of infinite density where mathematics breaks down. Since we think the Universe obeys certain mathematical principles (more on that later), one of the major challenges of contemporary physics is to solve this problem. Maybe the Universe isn't really mathematically harmonious. However, so far the search for better theories has been very successful, so we haven't hit the limits of logic just yet.

Deciding what makes a theory better on purely philosophical grounds is far from easy. A generally sensible approach is to refrain from saying, "YOU TOTAL TWERP THIS THEORY IS OBVIOUSLY WRONG BECAUSE IT'S RIDICULOUS !" and instead say, "Hmm, I don't like this theory very much, let's see if we can do better." Be moderate.

A desire for quick, clear, definitive answers : Some people fail to consider the full picture. They see a correlation and they assume the most obvious conclusion. This is not always because they are stupid, but because some elements of rational thinking (particularly statistics) do not come naturally - they must be taught. Similarly, people do not always think through the full implications of an idea. Sure, the pyramids could have held grain, but that would be a (literally) monumental undertaking to store a fantastically small amount of grain.

A little knowledge is indeed a dangerous thing, particularly when it's coupled with a very little amount of thought. You have to try and consider not just what your idea gets right or explains well, but also what it has problems with. And no, "problems" do not mean, "is definitely wrong". There might be other factors at work which explain small discrepancies. You only chuck a model out completely when it can be shown to have failed absolutely, i.e. repeated major failures that simply can't be resolved without essentially inventing an entirely new theory. Even then, you might still be able to use it in certain circumstances.

Misunderstanding intelligence : Being an expert in nuclear missile design doesn't mean you have the slightest clue about French cheese making, yet people professing knowledge far outside their specialist area (in which they really do know a lot) is a problem which has been well-known since ancient times. Apparently, they think that because they know a lot about one subject, they must be automatically capable of forming a respectable opinion on all matters. Recently a study has even found that some experts profess knowledge of things which don't even exist.

Another way of saying this would be, "because they're arrogant little buggers". Perhaps there's a deeper underlying cause to this one, I don't know.

Fear : All strong emotions can overwhelm our rational thinking, particularly fear. Sometimes a crazy idea can arise because people are literally not thinking straight. It's not just a heat of the moment issue either : keep people afraid or angry all the time, and their capacity for rational judgement diminishes. Perhaps most dangerous of all is an underlying fear of change - not any specific change, just anything new. When one understands the existing system, anything new becomes a threat. Or as Paul Kriwaczek put it in his book Babylon :

"Those societies in which seriousness, tradition, conformity and adherence to long-established - often god-prescribed - ways of doing things are the strictly enforced rule, have always been the majority across time and throughout the world.... To them, change is always suspect and usually damnable, and they hardly ever contribute to human development. By contrast, social, artistic and scientific progress as well as technological advance are most evident where the ruling culture and ideology give men and women permission to play, whether with ideas, beliefs, principles or materials. And where playful science changes people's understanding of the way the physical world works, political change, even revolution, is rarely far behind."

Such a fear is a major (but not exclusive) component in mistrusting science, which is by its very nature a process of constant change and development.

The thing about fear is that knowledge is not a perfect defence against it. It certainly does help, and it's good to have knowledge sooner rather than later. But human beings are more complicated than that - emotions rarely have simple on/off switches : "don't worry about the sharks, I'll save you !" will not cause fear to stop instantly. Fear can also lead to some of the self-propagating modes of stupid thinking discussed below, making it extremely hard to break.

Ultra-conservatives fear anything new, conspiracy theorists and that ilk fear anything old. Both are trying to assert control over things they don't understand. The end result appears very different, with conservatives wanting to stop all change and the conspiracy theorists wanting far more change, but they may actually be two halves of the same coin.

Limited intellectual capacity : I deliberately put this one last on the list because it's the least pleasant. All of the others can apply to reasonably intelligent people and simply prevent them from thinking. But it seems to me very unlikely that everyone has an equal innate ability to think, just as our abilities to climb or sing are different. Training can only take you so far. I could probably become a competent pianist with enough dedication, but never a virtuoso - and I couldn't manage to play any kind of sports at any professional level whatsoever.

On the other hand if I wasn't taught anything at all, I would certainly be much more stupid than I am now. You can probably only teach people to be as intelligent as their nature allows, but there's no lower limit on how stupid they can become.

Some people really are, through no fault of their own, just stupid. Maybe we should consider the phrase, "You're stupid !" to be the intellectual equivalent of, "You're crap at basketball !". OK, yes I am. Utterly and forever crap. What of it ? I have no wish to pick on those who are genuinely mentally deficient - rather, I'd like to see if we can correct stupid behaviours in those who have the capacity to be better.

They say you should never attribute to malevolence what you can attribute to stupidity. We might extend this to say that you should never attribute to mental deficiency what you can attribute to inadequate teaching or the other causes of stupidity (otherwise you won't even try to teach them anything). Still, the difficult question should be asked : how much natural variation in terms of thinking ability is there in the population at large, and how big a factor is this in the sheer depressing ridiculousness that the world is so full of ?

SECONDARY CAUSES

Refusing to consider contrary evidence : When you come to a stupid conclusion, if you don't look at the evidence against it, you doom yourself to persisting in a stupid belief. This usually happens when someone is certain of their position : looking at the counter-evidence would be a waste of time (this is very similar though not quite the same as bias, discussed below, which is when you actively dislike the alternative or its proponents rather than simply believing you've already got the right answer). The same could be said for a correct conclusion though. If you've got a mountain of evidence in favour of something, when should you look at the alternatives ?

Occam's Razor is a useful tool here - if your explanation is more complicated than the alternative, you might want to reconsider it. It's true that there's no compulsion for the Universe to be simple; however, simpler explanations are usually easier to test. It's good practice to start as simple as you can and add in complexity only as needed. As a rule, the more complex your theory, the more you can adjust it to fit whatever data comes your way. "With four parameters I can fit an elephant, and with five I can make him wiggle his trunk," as von Neumann reportedly put it.
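To see why extra parameters are so dangerous, here is a minimal Python sketch (my own illustration, not von Neumann's actual elephant). It assumes a handful of invented data points with no pattern behind them, and uses Lagrange interpolation, a standard technique, to build a five-parameter polynomial that passes through every one of them. The function name `fit_exactly` and all the numbers are hypothetical.

```python
from fractions import Fraction


def fit_exactly(points):
    """Return a polynomial (as a function) passing through every point.

    Uses Lagrange interpolation: n points with distinct x values need
    n free parameters (a polynomial of degree n - 1), and then the fit
    is always perfect, whatever the data are.
    """
    xs = [Fraction(x) for x, _ in points]
    ys = [Fraction(y) for _, y in points]

    def model(x):
        x = Fraction(x)
        total = Fraction(0)
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            # Basis term: equals yi at xi and zero at every other data point.
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total

    return model


# Made-up "observations" (an assumption for illustration only).
nonsense = [(0, 3), (1, -7), (2, 12), (3, 0), (4, 5)]
model = fit_exactly(nonsense)

# The five-parameter model reproduces every data point exactly...
residuals = [model(x) - y for x, y in nonsense]
print(residuals)

# ...but that says nothing about whether its next prediction is any good.
print(model(5))
```

A perfect fit to meaningless data tells you nothing, which is rather the point : the more knobs a theory has, the less impressive it is when it matches the observations.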

Another good rule of thumb is that if you want to promote your theory, that would be a good time to consider the evidence - especially when the opposition is strong. How do you know that rabbit on Mars isn't just a rock ?

Biased : Similar to the above, if you only trust certain sources, you basically rule out whole swathes of evidence. This can happen for precisely the above reason - you don't believe another alternative is possible or likely, so a source that promotes that idea must be biased, therefore you never listen to them (importantly, it can also be the other way around - you start off thinking they're biased because they act like a jerk, and only later realise that they don't agree with you). You end up in an echo chamber. It's circular reasoning : this is true, so this cannot be true, without admitting the possibility of error.

The thing is that bias is real. In this regard, people who believe fringe ideas and mainstream scientists both see the other as biased. So bias can reinforce a belief in both correct and wrong ideas. Even if you can acknowledge your own bias, it's not easy to decide which is the case. You might want to ask yourself what it is you're biased against : a person, an institute, an idea, anyone who believes that idea ? Check the basis of that idea thoroughly. If it's supported by a large number of people in different institutes in different countries and has multiple, independent lines of supporting evidence, you might want to go home and rethink your life. Or at least read my article on the notion of a false consensus.

Misunderstanding evidence : Absence of evidence is not proof of absence. But, absence of evidence is not proof of existence either, which is what some people seem to think. No, I can't prove Bigfoot doesn't exist, but that doesn't make it the slightest bit more likely that Bigfoot does exist.

Regular readers may perhaps say at this point : "Ahah ! So why do you insist on being an agnostic if you're so sure Bigfoot doesn't exist ? You're being a big fat hypocrite, and also you smell." Wow, you guys are rude. For one thing I just don't care in the slightest about Bigfoot, but keep reading, you silly goose. Anyway, more attentive regular readers will remember that I already covered a similar objection to this one extensively.
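The Bigfoot argument can be put into rough numbers using Bayes' theorem. This is only a sketch : the starting belief and the 90% figure are invented purely for illustration, and the function name `posterior` is my own. It shows how your belief should shift each time a search turns up nothing.

```python
def posterior(prior, p_obs_if_true, p_obs_if_false):
    """Bayes' theorem: probability of the hypothesis given the observation."""
    numerator = prior * p_obs_if_true
    evidence = numerator + (1 - prior) * p_obs_if_false
    return numerator / evidence


# All numbers below are illustrative assumptions, not measurements.
prior = 0.01  # initial belief that Bigfoot exists

# Observation: a search finds nothing.
# Assumed: if Bigfoot exists, a search still finds nothing 90% of the time.
# If Bigfoot does not exist, a search always finds nothing.
belief = prior
for _ in range(20):
    belief = posterior(belief, 0.9, 1.0)

# Belief shrinks with every empty-handed search, but never reaches zero.
print(belief)
```

Under these assumptions, each failed search nudges the probability down a little. It never hits exactly zero (absence of evidence is not proof of absence), but it certainly never goes up.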

Misunderstanding intelligence : Different from the primary cause listed above, intelligence is sometimes confused with knowledge. So if you already "know" a stupid idea, believing that intelligence = knowledge means that admitting an idea is not true makes you more stupid. Of course, in actuality the exact opposite is true. Admitting you were wrong is a sign of intelligence !

The difficulty with this one is that the idea is so widespread that it's like a self-fulfilling prophecy. So many people think that if you got something wrong and admit it, it's a sign of weakness, that it's often not an intelligent thing to admit that you were wrong - unless you enjoy being cut to pieces by the tabloids. The worst problem is that sometimes politicians do u-turns not because they've genuinely learned anything but simply to curry favour with the voters or, much worse, with rich individuals and big businesses.

Mistrusting intelligence : If you can't understand an idea, that does not mean it isn't true (see "preferences" above). "It doesn't make sense to me" - well so what ? I don't understand the first thing about brain surgery, but you don't see me going round saying, "brain surgery is a hoax !". If it demonstrably works, then your lack of understanding means nothing. It's possible the fault lies with your teacher, or you could, perhaps, just be plain old stupid. The trouble is that stupid people sometimes aren't able to detect their own stupidity.

Insistence on certainty : Although there are a few things of which we can be certain, these are extremely rare. If you insist on absolute proof, you'll get nowhere. You won't believe the Earth is round until you're orbiting it yourself in a spaceship ? Tough. You'd only say the whole thing was a simulation anyway. Your senses aren't foolproof. That's why we have to define certainty - but keep reading.

Insistence on uncertainty : Even the agnostic position can be taken too far. If you think things are unknown, that's one thing. But if you are certain we can never know them, even when the evidence is staring you in the face and spitting in your eye, that's quite another. Whether we can ever be truly certain of anything is a question philosophers have been wrestling with for millennia.

My own stance is no, technically we cannot (for reasons discussed below), but this isn't helpful. We have to approximate some things as certain, because if we don't we'll never get anywhere. The way I reconcile this dilemma is to say that all things are done under the assumption of an objective, measurable reality. If this assumption is flawed then science falls apart - and you can use the excuses I describe below to believe arbitrarily stupid things. It is safer and vastly more productive to assume that reality is real - within this assumption, a small measure of certainty can be restored.

Belief that the Universe is a simulation : Or is governed entirely by the whim of a capricious deity. In that case, there's no point in logic. Anything could happen at any moment for no reason. The fact that things have remained pretty darn consistent up until now doesn't mean anything. This sort of belief essentially says, "we can't know anything". And maybe we can't, but that invalidates the whole scientific approach. We may as well all give up and cry.

There are various ways one could restore logic in these scenarios : maybe the simulation is utterly and perfectly consistent, maybe the deity isn't entirely capricious or doesn't control everything all of the time. You probably only run into real trouble when you insist that these possibilities are the only explanation.

Belief that the Universe is infinite or eternal : The problem with this one is that it nukes probability. If your theory requires a fantastically unlikely event to have happened, that's perfectly fine. In an infinite Universe, everything that can happen does happen, an infinite number of times. The fact that when you roll a dice it doesn't turn into a large carnivorous duck and eat everybody no longer proves that that won't happen. Probability doesn't mean anything in an infinite Universe. This is seldom acknowledged - people prefer to concentrate on the notion of there being an infinite number of mes* - and ironically enough people sometimes argue that a finite, mortal Universe is not scientific because of the religious implications.

* Literally only me. Not you. You suck.
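The way an infinite Universe nukes probability can be sketched with a bit of arithmetic. If an event has probability p on any one try, the chance of it happening at least once in N independent tries is 1 - (1 - p)^N, which creeps towards certainty as N grows without limit. The Python below is a minimal illustration ; the value of p is a number I made up, and the function name `p_at_least_once` is hypothetical.

```python
import math


def p_at_least_once(p, trials):
    """Chance that an event of per-trial probability p happens at least once.

    Computes 1 - (1 - p)**trials, using log1p and expm1 so that
    precision is not lost when p is extremely small.
    """
    return -math.expm1(trials * math.log1p(-p))


# Assumed (invented) odds of the carnivorous duck on any single roll.
p = 1e-30

# Print the chance of at least one duck for ever larger numbers of rolls.
for exponent in (10, 20, 30, 40):
    print(exponent, p_at_least_once(p, 10 ** exponent))
```

With few enough rolls the duck is effectively impossible ; with enough rolls it becomes effectively guaranteed. Let the number of rolls be infinite and "fantastically unlikely" stops being an objection to anything.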

Now, these last two secondary causes are probably controversial. Of course, the Universe could be a simulation, or infinite. It's just that those ideas are not helpful. If you say that logic simply doesn't work, then you've basically declared science to be null and void. Is this stupid ? I don't know - that's a pretty deep philosophical question. If you accept that science does work, then yes, it is extremely stupid. On the other hand, it's possible that our mathematics is not yet up to the task of giving sensible answers but a solution does exist, we just have to keep researching to figure it out.

Right now I guess I'd say that these ideas are very interesting, but stupid. It's possible that they will become less stupid in the future, so some stupid ideas are worth pursuing. You aren't being stupid to ask the question, "Is the Universe infinite ?" but if you say, "Yeah, dragons existed because everything that can exist does exist / it's all a simulation so there are no impossible things" then you are being stupid. Still, it's interesting to consider that merely believing an idea doesn't just indicate that you are stupid, it can actually make you stupid as well.

[Image: Natural selection may have helped us become smart enough to create the internet and land on the Moon but it's clear there's still a lot of work left to do.]

I suspect some people are going to be very angry about that.

Are You Being Stupid ?

A stupid person is someone who displays the above behaviours more often than not. A really stupid person is one who's aware of the causes of stupidity but doesn't recognize them in themselves. An extraordinarily stupid person is one who does recognize their own stupidity but doesn't try and do anything about it.

It's also interesting to ask the reverse question : can you come to an intelligent conclusion by being stupid ? Yes. The classic example is reverse psychology - knowing someone is so biased against you, you can deliberately suggest they do something knowing full well they will do the opposite. You can even do science by completely irrational methods, to some extent - literally dreaming up or guessing the answer to a problem, though you do have to use more objective approaches when testing if your idea is any good or not.

But convincing people isn't easy. In my experience, inherently stupid people are not difficult to manipulate. What you have to remember is that very sophisticated, logical arguments won't work on them, because they are literally not capable of understanding them.

There are also people who are so convinced that the external world is not real and objective that there's very little point arguing with them. Even facts and certainties mean absolutely nothing in this scenario. The tricky thing is, they might be right. But what exactly this viewpoint does to advance our knowledge I'm not sure - in fact it seems to me that such an approach has not produced a single useful discovery in history. If I'm wrong, do let me know.

But such people, I think, are a small minority. The vast majority seem to be otherwise quite intelligent people who are suffering from a combination of the various factors. Perhaps the most important common factor (in my anecdotal experience) is the belief that changing your mind is a sign of weakness. And it's good to defend an idea to a point, otherwise you'd be in such a muddle that you'd never get anywhere with anything. Reaching a stupid conclusion can be done by perfectly rational thinking, but holding on to that conclusion in the face of strong evidence - that's what's really stupid.

[Image: You can't ever learn something without first admitting that you don't know the answer or that what you currently think might be wrong.]

Clever Idiots

[Image: I take very strong issue with the "goodly number" (what on Earth does it mean ?) but agree with the sentiment.]

Testing these radical ideas is not stupid. What would be stupid is to cling to a very contrived explanation when there's a much simpler one available. There's no particular reason to expect the Universe to be full of iron whiskers, and lots of evidence that the Big Bang model is correct. So to say, "I'm sure the Universe MUST be eternal, so I must find a way to disprove that idiotic Big Bang notion once and for all even if that means the Universe must be filled with long bits of iron for some reason" is an example of preferences, even though the Big Bang is not a fact.

Intelligent people behaving stupidly are perhaps the most dangerous of all. Less intelligent people might be forced to admit to changing their mind even if they don't like it, but intelligent people can come up with amazingly contrived solutions that most of us would never think of. Sadly, the ability to understand complicated mathematics appears to be no defence against all of the various sorts of stupid thinking.

Perhaps we should think of the ability to solve specific problems as just an ability, like playing basketball. Being able to understand mathematics or operate a particle accelerator just means you have one specific ability that others lack. You may or may not be generally intelligent as well, and maybe there's a correlation between certain abilities and the ability to solve problems in general. I don't know. My anecdotal experience tells me that if there is a correlation, it probably has a lot of scatter in it.

[Image: Personally I think Comic Sans is great, so screw you.]

Part of the reason for this may be that people think in black and white terms : this is right, this is wrong, that's it. So if you've learned something, or even discovered something new for yourself, you've increased knowledge, and that knowledge is a certainty. Whether this is innate or a result of the education system I can't say, though I do think the latter is at least a contributing factor. Believing there are only right or wrong answers is a hair's breadth from arrogance, yet this is overwhelmingly the approach taught in schools.

The trouble is that at school level there are topics which do have clear-cut answers. The density of quartz doesn't depend on how much you love quartz and while it might change if you dropped it onto a neutron star, there's absolutely no point considering that in a geology class. The humanities classes offer a natural way out of this, because it's much more obvious that there are only good and bad answers, not right and wrong. But, while teaching science does require starting off with simplifications, would it really be so hard to tell people, "this is a simplification" ?

Appearing Stupid

This works both for mainstream scientists talking to the great unwashed ("If I travel fast enough I'll go forwards in time ! 95% of the Universe is unknown and invisible ! You could fly like a bird on a moon with a thick atmosphere of cold methane !") and for non-mainstream researchers talking to the main scientific community ("Nessie is real ! Aliens have come to drink the blood of cows ! All world leaders are evil reptiles in disguise !").

Crucial point : the reverse of the meme ("the crazier you sound to ignorant people, the more research you must have done") is not true. It is possible that if you sound crazy, you simply are crazy.

One of many reasons why I don't find non-mainstream ideas appealing is that they are innumerable and contradictory. Depending on who you believe, dark matter doesn't exist because : we've all missed something trivial and obvious in the calculation / it's all due to massive electrical forces coming from nowhere / our theory of gravity is wrong / it's real but it's just ordinary matter that's difficult to detect / astronomers are all involved in a conspiracy / it's actually a life-form / it's real but exists in other dimensions / it's all defects in the spacetime continuum / the Universe is actually only 300 million kilometres across so of course there's no dark matter, you dolt. Far safer, in my view, to research the mainstream option, bearing in mind that trying to falsify that model is a key part of the research process.

This raises an important question : if you are really convinced that the evidence favours an idea that everyone else considers to be stupid, are you automatically an idiot ? The answer is no, not unless you fail to change your mind when things are explained properly to you. OK, you might be right and everyone else is wrong, but this is very, very unlikely.

I mentioned earlier the problem of mistrusting intelligence, that people don't believe things they don't understand even if those things demonstrably work. I also mentioned the idea that experts only have a narrow field of expertise. The flip side of this is that within their field, they genuinely do understand more than non-experts. That is the whole point of having experts - you can't just expect to skip years of research and do their job as well as them. Stupid people are reluctant to accept this rather basic and obvious fact.

Summary and Conclusions

If there are any important take-home points from this post, I suppose they would be as follows :

- You can believe stupid things even if you're very intelligent, especially if you've been misinformed or haven't been taught certain essentials of critical thinking. You might also just be a total dipstick. It's your thought processes which should be used to judge whether you are stupid or not, not your conclusions.

- Learning requires you to change your mind. That means - shock horror - going from a state of being wrong to, if you're lucky, being less wrong. Guess what ? You're not omniscient ! Saying "I was wrong" should be equivalent to, "I have learned something".

- The ability to solve problems in a specialist field is not necessarily a sign of overall intelligence. Being an expert just means you understand one particular field more than other people.

- Certain specific beliefs can actually induce stupidity. If you think that logic is just essentially luck, there's really no hope for you. OK, you might be right, but you're not useful. Go away.

People sometimes believe stupid things are true for entirely logical reasons, but other times they do so because they are guilty of thinking stupidly. Even then it does not necessarily imply that someone is fundamentally stupid, unless they do this persistently and especially if they continue to do so after they've been taught why what they're saying is stupid.

Many of these sorts of stupid thinking probably happen for very good evolutionary reasons. A world in which your only technology is fire and a pointy stick, and in which you might know a hundred other people during your life, poses very different challenges from today's. Is this mushroom safe to eat ? The Stone Age answer would have to have been either yes or no, or more accurately, that it will either be tasty, cause an upset stomach, or kill you stone dead. There wasn't much scope to say, "it won't kill you but it might increase your chances of contracting cancer later in life by 3%". The prehistoric world both required easy, definitive answers, and utterly lacked the capacity for statistical analysis.

Essentially, it was far safer to run away from a tiger that wasn't there than not run away from a tiger that was there. So we have hyper-advanced pattern recognition and generalisation abilities, but there hasn't been an evolutionary selection pressure to check which tigers are dangerous : you won't die if you run away from a tiger which is injured or just not hungry, but you very well might if you stopped to check the tiger's physical and mental health before deciding if you should run away. Even if hardly any tigers were naturally man-eaters, it still would have made more sense to run away from all of them.
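The tiger argument is really a statement about lopsided costs, and a few lines of arithmetic make it concrete. What follows is only a minimal sketch : the function names and every number in it (a 1% chance that a tiger is a man-eater, a cost of 1 for a wasted sprint, a cost of 1000 for being eaten) are invented for illustration and are not measurements of anything.

```python
def expected_cost(p_dangerous, cost_of_fleeing, cost_of_attack, flee):
    """Average cost per tiger encounter under a fixed policy.

    All inputs are hypothetical, illustrative quantities.
    """
    if flee:
        # Running away costs the same whether or not the tiger was dangerous.
        return cost_of_fleeing
    # Staying put costs nothing unless the tiger turns out to be dangerous.
    return p_dangerous * cost_of_attack


def break_even_probability(cost_of_fleeing, cost_of_attack):
    """Fraction of dangerous tigers above which always fleeing is cheaper."""
    return cost_of_fleeing / cost_of_attack


# Assumed numbers : 1 in 100 tigers is a man-eater, a wasted sprint costs 1,
# and being eaten costs 1000 (arbitrary units).
flee = expected_cost(0.01, 1.0, 1000.0, flee=True)
stay = expected_cost(0.01, 1.0, 1000.0, flee=False)
print(flee < stay)
print(break_even_probability(1.0, 1000.0))
```

With these made-up values, running from every tiger is the cheaper policy even though 99% of the sprints are wasted. Staying put only wins if fewer than one tiger in a thousand is dangerous, and checking which kind you've met is not free either.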

[Image caption : Though I'm not sure anything can explain this.]

So this most dangerous concept of the other, the notion that people with a different ideology or ethnicity are inferior to us, might have arisen due to evolutionary reasons that made a lot of sense fifty thousand years ago. While there have been tens of thousands of years of evolutionary selection effects to enforce that sort of thinking, it's only in the last few hundred or maybe a few thousand years that co-operation with other groups has become potentially more beneficial than dangerous (plus access to other groups has become much easier through better transportation technologies). There simply hasn't been enough time for us as a species to adapt to this new reality.

This also partially explains why we trust some theories (and people) more than others : it was safer to trust our own local knowledge than that of others. If the snakes here are venomous, it makes a lot of sense to be wary of all snakes. This is why we learn by induction, generalising from specific examples. Inductive reasoning is favoured by evolution if all you've got is a pointy stick, but it makes statistical analysis seem like a very suspicious process. You experience a cold winter and every fibre of your being will tell you that global warming is nonsense, because a few news reports are no match for the deaths of your ancestors over the last million years forcing you to learn by induction. That means you become very suspicious of science through reasoning that feels sound but is utterly flawed. Hence some ideas are rejected by groups who are otherwise diametrically opposed (conservatives versus neophile conspiracy theorists).

Not that this method of learning explains all stupidity by any means - it can't explain why people sometimes hold radically different opinions even within the same family. Another factor is our emotional bias. Evolutionary effects don't really come into play as long as someone survives long enough to rear healthy children who can fend for themselves. Once you've got enough intelligence to do that, the only selection effect is who is able to rear the largest number of healthy children. So unless your emotional needs are so strong that they're actually harmful, there's not necessarily much pressure against your enjoyment of something which has no actual benefit even if it's stupid.

In contrast, confidence is widely known to be, well, sexy - so any pressure against people believing stupid (but not actually dangerous, or at least not very dangerous) things may be offset by them looking darn good when they explain themselves in a self-assured, uncompromising manner. Few people find a brooding, analytical, worrying approach to be particularly romantic.

Taken together, all of this can go a very long way towards explaining both why people believe stupid things even if they're not actually of low intelligence, and why an anti-science movement (of sorts) exists in a science-dependent civilization. The methods of thinking employed by these people (only trusting themselves and their friends, being unduly afraid of things that carry almost no risk, wanting easy answers) made a lot of sense ten, twenty, a hundred thousand years ago. The timescale over which this thinking has become less valuable is far too short for evolution to have had much effect, and in any case many stupid beliefs aren't stupid enough to prevent you having children - they aren't necessarily selected for by evolution, but they aren't selected against either.

The really interesting question is : how much of this natural but now defunct thinking can we avoid through proper teaching ? How many people have minds like clay - bake once and then be careful not to drop it - and how many like metal (reforge it to a hard, razor-sharp point as needed) ? To this I have no real answer. All I can say with certainty is that it does make a difference for some people. I have colleagues who I can clearly see stop themselves from saying things because they've mentally checked themselves - they've realised they were about to, or did, say something that's not justified. There might, perhaps, be a point of critical stupidity - if you're too stupid to understand why you're being stupid, you'll never become more intelligent.

The answer to the question of whether intelligence is determined by nature or nurture seems to me most likely that it's a combination of both. Black and white thinking is taught to us from a very early age, even for good reasons - but if it isn't the root cause of stupidity, then it is at least a contributing factor. Evolutionary effects are undoubtedly important, but surely cannot fully explain why people act against their own interests or against the benefit of their entire population. We are not creatures driven solely by reason or instinct, we are far more complicated than that. Sometimes we push the boundaries of intelligence and launch space probes beyond the edge of the Solar System. And perhaps by the same abilities which allow these impressive feats of creative problem-solving, we also accomplish things which are simply acts of glorious, epic stupidity.
